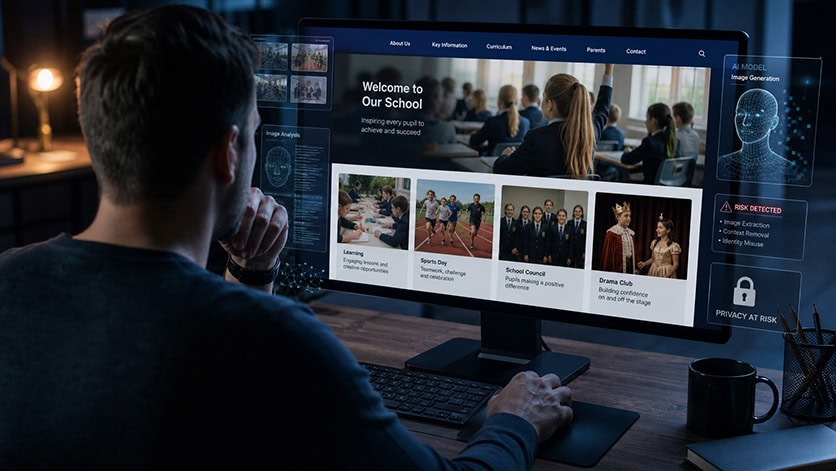

Should schools still publish photos of pupils online?

It’s a question that’s been going round and round in my head, and one that many schools are now beginning to ask.

Given growing concerns about generative AI being used to create indecent images (and increasingly videos) of children, plus increasing reports of ‘normal’ images being repurposed using AI, should we remove all pupil (and possibly staff) images from the school website? After all, billions of images are shared online every day, so is this overly cautious, even scaremongering?

Although I’m talking about the school website here, it’s important to note that this extends to anywhere images are published. The real question is therefore this:

“Could an innocent image of a child/young person/member of staff published in good faith be misused?”

The answer is an obvious yes, so does that mean that schools should stop publishing photos? No, not necessarily. But at the very least the risk needs to be considered by senior leaders, governors and trustees. Doing nothing is not an option.

What’s the problem?

Schools have always used photos (and to a lesser degree videos) to celebrate achievements and promote school life: smiling pupils and staff, sports days, the school council, drama performances and much more. They may obtain consent to publish using consent forms or opt-out processes, much more so post-GDPR. This raises another question: in the age of AI, do parents (and pupils where applicable) understand the risks well enough to make an informed choice?

What do we know?

The Internet Watch Foundation reported in March 2026 that, in 2025, it identified 8,029 AI-generated images and videos of realistic child sexual abuse. That represented a 14% increase in criminal AI content on the previous year. They also reported a dramatic increase in AI-generated child sexual abuse videos, from 13 in 2024 to 3,443 in 2025, with 65% of those videos classified as Category A, the most severe legal category.

UNICEF, ECPAT and INTERPOL have also highlighted the scale of the issue. In a study across 11 countries, at least 1.2 million children disclosed that their images had been manipulated into sexually explicit deepfakes in the previous year. In some countries, this represented around 1 in 25 children.

We already know that publicly available images can be copied, scraped/harvested, downloaded or used in ways that were never intended by the person who published them, and this includes school websites.

But more specifically what we’re talking about here is where pupils or staff are being targeted.

A face can be placed into a different scene, and clothing can be altered or removed. What once required a good working knowledge of Photoshop or similar software no longer does.

Many people refer to this as “deepfakes”, but that word can sound technical and detached. For victims, this is not a technical issue. It can be humiliating, frightening and deeply traumatic. In many cases victims may never even know that an image has been created or shared. Most media coverage of AI image abuse tends to focus on public figures such as celebrities and politicians. This can give the false impression that ordinary people are unlikely to be targeted, but that isn’t the reality.

The motivations for targeting an individual are numerous, e.g.:

- Bullying.

- Humiliation.

- Revenge.

- Coercion and blackmail (including financially motivated sexual extortion).

- Sexualisation.

Schools are reporting incidents where images of pupils or staff have been taken, altered and shared in peer groups. In some cases this has involved images taken in school. In others, innocent images have been taken from social media and repurposed. You don’t need to look very far to find horrifying examples of AI image abuse:

- BBC – Pupils using AI to create pornographic images of teachers.

- Wired – The deepfake nudes crisis in schools.

- The Guardian – The rise of deepfakes in schools.

In January 2026, the Tech Transparency Project reported that it had found 55 apps on Google Play and 47 on Apple’s App Store that could digitally remove or alter clothing in images. According to the investigation, those apps had been downloaded more than 705 million times and generated around $117 million in revenue.

This is not hidden away in some obscure corner of the internet or the dark web. Tools capable of image-based abuse have been available through mainstream app stores, despite those stores having rules that should prevent this type of content.

The law is tightening, including around non-consensual intimate deepfakes and tools designed to create this kind of abuse. But legislation alone will not remove the risk. Where there is demand, there will always be attempts to find workarounds. Whilst the majority of the apps reported by the Tech Transparency Project have been removed, there are plenty of websites and AI models freely or cheaply available to produce this type of content. That is why schools still need education, policy, governance and proportionate risk assessment.

What can we do?

There are two extremes:

- Remove all images and never publish again.

- Do nothing.

Neither of these is a viable option. We need something in between, proportionate and appropriate, which means schools should consider a review and risk assessment. Schools already have policies in place for taking and using images. These will already cover things such as the use of full names, school uniform or logo on display, checking consent or opt-out status before publishing, and much more. Is this policy up to date, and does it reflect the emerging concerns? For example:

Education and training:

Everyone needs to understand the risks and emerging concerns when it comes to AI image misuse: children, young people, all school staff, governors/trustees, parents and caregivers.

Consent:

Do we need to reassess consent and opt-out processes? In other words, do parents, caregivers and pupils really understand the emerging risks and increasing concerns in this generative AI world? As with education and training above, do they have the right information to make an informed decision?

Taking and using images:

- Does the camera/device you use embed EXIF metadata such as geolocation? Can this be turned off, or can the EXIF data be removed before publishing?

- Are images close-ups, e.g. portraits? Could you photograph pupils from further away, so that faces are much lower resolution when enlarged?

- Can you take images where faces are side-on, or which show only the backs of heads?

- Could you tell the same story another way, such as through displays, hands-on activities or graphics?

- Could you hide some images behind a password-protected web page, particularly if these are for the viewing of parents only?
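For whoever looks after the website, the EXIF question above can be checked and handled in a few lines. The following is a minimal sketch rather than a recommended tool: it assumes the photo is a standard JPEG, where EXIF data (including any GPS location) is stored in a metadata block known as an APP1 segment, and it simply drops those blocks while leaving the picture itself untouched. The function name `strip_exif` is made up for this illustration, and other kinds of metadata or other file formats would need separate handling.

```python
import struct


def strip_exif(jpeg: bytes) -> bytes:
    """Return a copy of a JPEG with its APP1 (EXIF/XMP) segments removed.

    Illustrative sketch only: assumes a well-formed JPEG whose metadata
    segments all appear before the image data, as is typical for camera files.
    """
    if jpeg[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG file (missing start-of-image marker)")

    out = bytearray(b"\xff\xd8")
    pos = 2
    while pos + 4 <= len(jpeg):
        if jpeg[pos] != 0xFF:
            raise ValueError("malformed JPEG: expected a segment marker")
        marker = jpeg[pos + 1]

        # Start of scan: compressed image data follows, so copy the rest as-is.
        if marker == 0xDA:
            out += jpeg[pos:]
            return bytes(out)

        # Each segment stores its own length (which includes the 2 length bytes).
        (length,) = struct.unpack(">H", jpeg[pos + 2:pos + 4])
        segment = jpeg[pos:pos + 2 + length]

        # Keep every segment except APP1, which is where EXIF data lives.
        if marker != 0xE1:
            out += segment
        pos += 2 + length

    raise ValueError("malformed JPEG: no image data found")
```

In practice this would be run on a copy of each photo before upload, for example by reading the file's bytes, passing them to `strip_exif`, and saving the result under a new name. Many cameras, phones and website platforms can also be configured to do the same job, which may be the simpler route for most schools.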

Should schools remove images of pupils from their websites?

Not necessarily. It depends on the purpose of the image, the level of identification, the context, the consent given, and the safeguards in place.

At the very least, a conversation and review need to take place. That means reviewing policies, consent and opt-out processes, website images, social media use, image retention and education/training for all.

This is not scaremongering; it is a real and growing safeguarding issue. Children, young people and members of staff are already becoming victims of AI-enabled image abuse. Schools don’t need to panic, but doing nothing is not an option.
